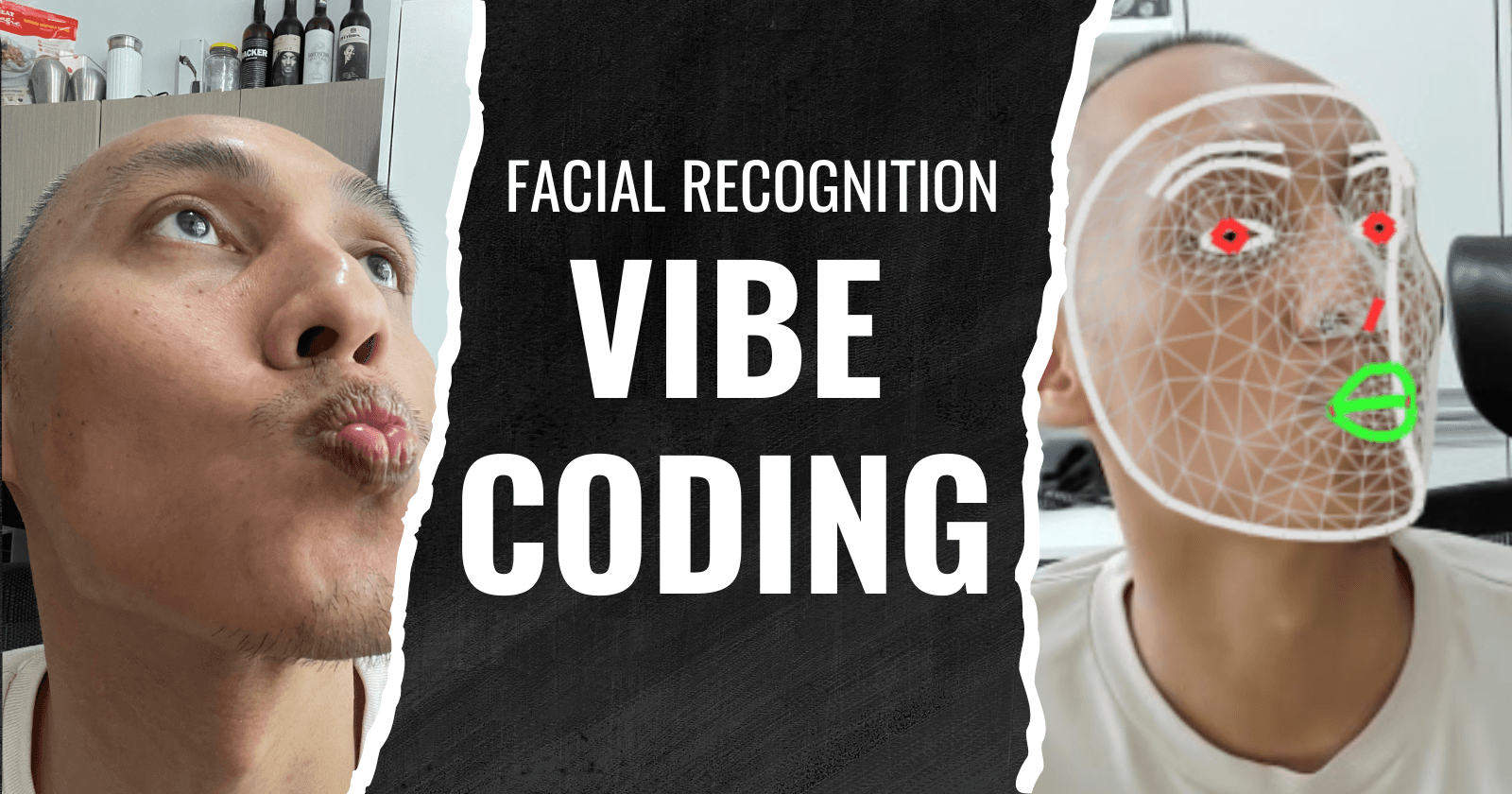

Building a Face-Controlled Cursor Using Filipino Gestures

Vibe Coding a Facial Recognition App with AI

Podcaster @ Kuya Dev Podcast | Backend Tech Lead @ Prosple | Founder @ Tech Career Shifter Philippines | Community Leader @ freeCodeCamp.Manila | Board Director @ ReactJS Philippines

Watch as I document my journey building a web app that lets you control a cursor using Filipino-style facial gestures - you know, that classic Filipino way of pointing with our lips! Using Google's MediaPipe for facial recognition and modern web technologies, I turned this quirky cultural gesture into an interactive experience.

What started as a planned 2-week project turned into a 5-day sprint thanks to some help from AI tools through vibe coding. The final app lets you move a cursor by turning your head while your lips are puckered - a uniquely Filipino interaction translated into code.

Key highlights:

Built with TypeScript and modern browser features

Uses Google's MediaPipe for facial recognition

Open source and available to try online

Mobile-friendly implementation

Adjustable sensitivity for different users

This fun experiment showcases how cultural elements can inspire tech projects while demonstrating modern web capabilities and AI-assisted development.

Full Video

Transcript

00:00-00:34 And here it is. When I turn my face while pouting, the ball follows. I can also turn the facial recognition mesh on and off. It's more fun without the mesh: you can really see how the ball follows where my lips are pointing. So good!

00:35-00:52 I gave myself a challenge: to create a meme application over the Christmas break. So I have less than two weeks to create this. Of course, there are days when I can't work on it because it's Christmas, right?

00:52-00:57 There's Medianoche, Nochebuena, so less than two weeks.

00:57-00:59 But what do we need to learn first?

01:00-01:03 It's an application to control the mouse using Filipino gestures.

01:04-01:18 You know, when we Filipinos are asked where something is, where the church is, for example, or where the mall is, how do we point? Using our lips.

01:18-01:22 So, we point like that, right?

01:22-01:31 I thought that I could use that to actually create an application to control the mouse using the lips.

01:33-01:37 I hope we can do it. But first, we have to research.

01:38-01:43 Because I'm using a MacBook, I first need to find out whether this can be done on a MacBook.

01:44-01:47 Because macOS is a bit limited in terms of permissions.

01:48-01:51 So, it's not easy to control things in Mac.

01:51-01:54 And you still need to compile using Xcode.

01:55-01:55 So, hopefully, it can.

01:56-02:02 But if not, I have my backup laptop that's running Linux, using Pop!_OS.

02:03-02:05 So, maybe that's where I'll do it.

02:05-02:08 But let's first research if it's possible in Mac.

02:09-02:11 Secondly, is it possible in Python?

02:11-02:14 And of course, I don't want to build this from scratch.

02:14-02:20 We need to find similar projects or maybe we can make something similar.

02:22-02:35 Or maybe we can find similar applications or repositories that can be used in terms of facial recognition or hand gestures that we can modify to get what we want.

02:36-02:38 We have a lot of research to do today.

02:39-02:43 This is currently Sunday, December 22.

02:44-02:44 Wish me luck!

02:47-02:48 Okay, good news!

02:50-02:53 I found a library that we can use to actually do this.

02:54-02:57 Not in Python, but in JavaScript.

02:58-03:03 That changes the application a lot because I don't need to build a native application in the OS.

03:03-03:09 Instead, I'm now planning to build this as a web application.

03:10-03:19 And that way, it will be accessible to everyone on the internet, those who can access that website, instead of having to install it on their computers.

03:20-03:26 A huge downside of that is that originally, the plan was to actually control the mouse, right?

03:26-03:29 Which is an operating system function.

03:30-03:32 So we can't do it through a web application.

03:32-03:39 Instead, we will just create a cursor in our web application that we can control using the lips.

03:40-03:50 It's easier in a sense and we don't need to wrestle with the permissions of macOS and even Linux, right?

03:50-04:02 So, that's a waste. I was just upgrading System76's Pop!_OS on my Linux laptop, and now I won't be using it.

04:02-04:07 This is actually a faster way. I think this can be done in less than two weeks.

04:08-04:09 Even a week, hopefully.

04:10-04:13 I might be underestimating the work involved. But it's a good start.

04:14-04:20 So, yeah. I will dive more into this. I'll start my repository. I'll build it.

04:20-04:26 And I will dive into the documentation of handsfree.js, which is the library that I'll be using.

04:39-04:44 So this is the demo of our initial version of our app.

04:45-04:52 It's simple actually. I didn't use any frontend framework.

04:52-04:57 We just used a simple HTML canvas.

04:58-05:02 Inside, we added a small ball of red color.

05:03-05:11 And at least for this initial version, the ball will just follow our mouse pointer.

05:12-05:16 So, there's a boundary, of course, it can't go out of the HTML canvas.

05:16-05:18 So, if the mouse goes outside the canvas, the ball can't follow it.

05:19-05:25 But if it's inside the canvas, it will follow the mouse movement.
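The behavior described above, where the ball follows the pointer only while the pointer is inside the canvas, can be sketched as a small pure function. This is a minimal sketch under assumptions: the names `Point`, `Bounds`, `isInside`, and `nextBallPosition` are hypothetical and are not taken from the project's source.

```typescript
// Hypothetical types and names, for illustration only.
interface Point {
  x: number;
  y: number;
}

interface Bounds {
  width: number;
  height: number;
}

/** True when the pointer lies within the canvas rectangle. */
function isInside(p: Point, b: Bounds): boolean {
  return p.x >= 0 && p.x <= b.width && p.y >= 0 && p.y <= b.height;
}

/**
 * Move the ball to the pointer when the pointer is inside the canvas.
 * Otherwise keep the ball where it was, so it never leaves the canvas.
 */
function nextBallPosition(current: Point, pointer: Point, bounds: Bounds): Point {
  return isInside(pointer, bounds) ? { x: pointer.x, y: pointer.y } : current;
}

const canvasBounds: Bounds = { width: 640, height: 480 };
let ball: Point = { x: 320, y: 240 };

// Pointer inside the canvas: the ball jumps to it.
ball = nextBallPosition(ball, { x: 100, y: 200 }, canvasBounds);

// Pointer outside the canvas: the ball stays at its last position.
ball = nextBallPosition(ball, { x: 900, y: -50 }, canvasBounds);
```

In a browser, a function like this would typically be called from a `mousemove` listener on the canvas, with the pointer converted to canvas coordinates first, followed by a redraw of the red ball.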

05:26-05:45 So, the next step is to wire up the facial recognition and then attach the ball to it: depending on where the user is facing, the ball should follow.

05:46-05:49 If we are looking to the left, it should go to the left.

05:50-05:52 If we are looking to the right, it should go to the right.

05:53-05:55 And so on and so forth.

05:55-05:58 So, there. We now have an initial application.

05:59-06:00 The same logic only.

06:00-06:16 [Music] Okay, progress. Time check, 11:23 PM.

06:17-06:19 It's still December 22nd, right?

06:19-06:23 So, we're here. Our progress is huge.

06:24-06:55 We used a different library. Instead of the one I mentioned earlier, handsfree.js, I decided to use a library that Google provides. The Google AI team calls it MediaPipe. And here it is: face recognition is built in, along with the landmarks that we need to measure the movement of the face. So, what will happen now is we will change what we were thinking earlier.

06:56-07:09 So, instead of using the lips to actually signal where we are facing, where the ball is going, what we will do instead is use the nose.

07:12-07:51 It's easier because we only use one point, on the nose. Wherever we are facing, the ball will go there. But the ball will only move when the lips are puckered. If we move our face while the lips are not puckered, the ball should not move. But when the lips are puckered and we move like this, the ball should move. So, that's what we're going to do next.
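The rule described here (the nose decides where the ball goes, the pucker decides whether it moves at all) can be sketched as follows. Several things are assumptions rather than details from the video: landmarks are taken to be normalized `x`/`y` values between 0 and 1, as MediaPipe's face landmark output provides; index 1 is assumed to be the nose tip; the webcam image is assumed to be mirrored; and `noseToCanvas` and `updateBall` are hypothetical names.

```typescript
// Hypothetical types and names, for illustration only.
interface Point {
  x: number;
  y: number;
}

/** A face landmark with coordinates normalized to the 0..1 range. */
interface Landmark {
  x: number;
  y: number;
}

// Assumption: index 1 is the nose tip in the face mesh landmark list.
const NOSE_TIP_INDEX = 1;

/** Convert a normalized nose position into canvas pixels. */
function noseToCanvas(nose: Landmark, width: number, height: number, mirror = true): Point {
  // Mirroring makes the ball move left when the user turns left in a selfie view.
  const x = mirror ? 1 - nose.x : nose.x;
  return { x: x * width, y: nose.y * height };
}

/** Move the ball to the nose position, but only while the lips are puckered. */
function updateBall(
  current: Point,
  landmarks: Landmark[],
  isPuckered: boolean,
  width: number,
  height: number,
): Point {
  if (!isPuckered) return current; // no pucker, no movement
  const nose = landmarks[NOSE_TIP_INDEX];
  if (!nose) return current; // no face detected in this frame
  return noseToCanvas(nose, width, height);
}
```

A direct mapping like this is the simplest option. A sensitivity multiplier around the canvas center would be a natural extension, but that is not shown here.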

07:51-08:01 Here it is. We've already got it. We've already detected the facial features, the landmarks on the face using Google's library.

08:03-08:06 Then, here it is. We're also showing it on the webcam.

08:07-08:15 What's left now is to do the computation for the ball to actually move in accordance with our face.

08:15-08:23 If we're pouting, the ball follows our nose, which moves along with the whole head.

08:24-08:29 So exciting. It's night already so we'll stop here and continue tomorrow.

08:29-08:40 When I was trying to break into tech, I had no roadmap, no mentor, and no idea if career shifting was even realistically possible.

08:41-08:49 I fumbled through it, made all the mistakes because I didn't have anyone to guide me.

08:50-09:09 Looking back, I wish someone had just said, "Here's what's happening, here's what to watch out for, and here's how to move forward." That's why I started Tech Roundup PH, my free weekly newsletter for aspiring Filipino techies and career shifters.

09:09-09:21 Every week, I break down what's happening in the Philippine tech scene, answer questions from subscribers, and share the kind of advice I wish I had when I was starting out.

09:21-09:24 Think of it as your weekly dose of mentorship.

09:25-09:32 And if you're into events, I've got you covered with a curated list of tech meetups and happenings around the country.

09:34-09:35 No more missing out.

09:36-09:39 Ready to grow your tech journey with a little help from Kuya Dev?

09:40-10:11 Sign up now at kuyadev.com/newsletter. Alright, let's get back to the video. Day 2. We made a lot of progress yesterday, and we'll just continue today. Hopefully we'll finish today. We have a lot of errands later, so I'm really hoping that before we head out, something is already working. Then I will just plan the rest. But before I proceed, I forgot to mention yesterday why our process was probably faster.

10:12-10:17 I was expecting this to take around two weeks of development.

10:18-10:20 But it was only the first day and I already got a lot done.

10:20-10:21 There was a lot of progress.

10:22-10:23 And that's just a few hours.

10:24-10:26 The secret is that I'm not a genius.

10:27-10:28 I'm not like that.

10:28-10:30 I use ChatGPT. I use AI.

10:31-10:32 Admittedly.

10:32-10:39 But I didn't use it to copy-paste things. I used it for someone to talk to, to exchange ideas.

10:40-10:49 So I used ChatGPT to solidify the idea of the application I'm building and how to approach it.

10:49-11:10 During that conversation, I realized that I can use the nose as the point of reference for where the user's face is pointing, instead of the lips, which would be more complicated because the lips move. The nose doesn't move much; it stays constant in relation to your face.

11:11-11:16 So instead of using the lips, we're using the nose as a point of reference where the user is looking.

11:17-11:25 Then the lips are only used to detect whether the user is puckering like that.

11:25-11:31 So, it's really hard to work out alone. If ChatGPT hadn't been there, I might have figured it out much later.

11:34-11:39 And it also gave some code, of course. That's how ChatGPT usually is.

11:39-11:46 It gives the code, but the code is outdated. The library it used is actually old.

11:46-11:58 So, it gave me an idea that, "Oh, Google has this." So I did my research outside of ChatGPT, and I saw that Google has updated libraries that you can use.

11:59-12:07 And I ran into some problems using the updated or latest version of Google AI's library.

12:08-12:15 So apparently, their new version has some problems. I'm not sure what happened.

12:15-12:18 The drawing didn't work; I got some errors.

12:19-12:27 So I tried downgrading the version, one version lower, one version lower, one version lower, until I found one that worked.

12:28-12:31 So this version works for my particular use case.

12:31-12:42 But I did that on my own, I didn't use ChatGPT because I think it doesn't have the latest versions of that software or library.

12:43-12:45 So I had to figure that out myself.

12:46-12:48 Fortunately, I have experience with this kind of thing.

12:49-12:57 There are times when there are open source libraries that if you get the latest version, it's usually broken.

12:57-13:02 So you have to go back a few versions to get the library that's okay.

13:03-13:06 So that's it. Let's go back to building the application.

13:15-14:14 ...the right corner and the left corner of my lips, so you can see the distance between them and tell whether the person is puckering like this. So you need to measure how far apart they are: the closer they are, the more probable it is that the user is puckering or kissing. We also need to account for the tilt of the head and my distance from the camera.

14:15-14:19 So we need to account for all of these.

14:19-14:28 So it's hard to compute that without having a relative point of reference that doesn't change.

14:29-14:32 Unfortunately, people's faces are different.

14:32-14:38 There's no same size of lips, there's no same size of nose and eyes.

14:39-14:46 So I needed to figure out a good algorithm to make this a bit accurate.

14:47-14:58 So now, if you look at it, when I move away from the camera and get closer, the distance just hovered around 0.05-0.06-0.04.

15:00-15:05 Before, in the first iterations of the algorithm, it fluctuated wildly.

15:06-15:07 So now, it's a bit more stable.
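One way to get a lip-corner distance that stays stable as the user moves toward or away from the camera is to divide it by another facial distance that scales the same way. The sketch below is not the algorithm from the video, which is not spelled out in the transcript. It is an illustrative normalization under assumptions: the landmark indices (61 and 291 for the mouth corners, 33 and 263 for the outer eye corners) are assumed to match the face mesh layout, and `mouthWidthRatio` is a hypothetical name. Its output is on a different scale from the 0.04 to 0.06 values shown in the video.

```typescript
/** A face landmark with coordinates normalized to the 0..1 range. */
interface Landmark {
  x: number;
  y: number;
}

// Assumed face mesh indices; verify against the landmark map of the library version in use.
const LEFT_MOUTH_CORNER = 61;
const RIGHT_MOUTH_CORNER = 291;
const LEFT_EYE_OUTER = 33;
const RIGHT_EYE_OUTER = 263;

/** Straight-line distance, which is unaffected by head tilt within the image plane. */
function distance(a: Landmark, b: Landmark): number {
  return Math.hypot(a.x - b.x, a.y - b.y);
}

/**
 * Mouth width divided by the outer-eye span.
 * Both distances shrink together as the face moves away from the camera,
 * so the ratio stays roughly constant. Returns null if a landmark is missing.
 */
function mouthWidthRatio(landmarks: Landmark[]): number | null {
  const mouthLeft = landmarks[LEFT_MOUTH_CORNER];
  const mouthRight = landmarks[RIGHT_MOUTH_CORNER];
  const eyeLeft = landmarks[LEFT_EYE_OUTER];
  const eyeRight = landmarks[RIGHT_EYE_OUTER];
  if (!mouthLeft || !mouthRight || !eyeLeft || !eyeRight) return null;

  const eyeSpan = distance(eyeLeft, eyeRight);
  if (eyeSpan === 0) return null; // avoid dividing by zero on degenerate input
  return distance(mouthLeft, mouthRight) / eyeSpan;
}
```

A smaller ratio means the lip corners are closer together, which is the signal for a pucker. Turning the head sideways also narrows both distances, so a real implementation may need a further correction for that.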

15:09-15:14 So, if I pucker, you can see the pucker being detected in the console log.

15:18-15:20 There, it's already detected.

15:22-15:30 So, the next step is for me to implement the movement of the ball on top.

15:30-15:32 We're almost there, just a little more.

15:37-15:44 It's the day before Christmas and time check around 5:30 p.m.

15:45-15:47 Our project is almost done.

15:49-15:57 The puckering is working, the movement of the dot on our canvas is okay.

15:57-16:01 It's following the movement of the mouth and the face.

16:01-16:06 So, what I will do is to actually clean up everything.

16:07-16:13 I will put a button to disable the mesh that comes out of the user's face.

16:14-16:16 So that we can see, right? It's like a body, huh?

16:16-16:22 The user is moving like that and the dot is moving too.

16:23-16:24 So, it's just a little bit. I will just put it.

16:26-16:30 Then, maybe it will take a while to show and hide the webcam.

16:31-17:41 Anyway, we'll get there. I'm actually thinking about whether to go further and develop a full application around it, but maybe not anymore, because my goal at the beginning was to build an application where the dot follows where the lips are pointing, and we achieved that. So maybe in the next vacation or break, we can develop it into something more. An application, or I don't know, maybe a game? Not sure. We can't do that now because it's the day before Christmas and I'll be celebrating with my wife, and tomorrow is Christmas Day, so there's no time to add more code to the application. So maybe on the 26th. I'll see you guys on the 26th. Bye!

17:42-17:49 One eternity later. Date check: it's December 28th.

17:50-17:54 And I didn't do anything for the whole week. I procrastinated.

17:55-17:59 And again, today is Saturday. I'm tired.

18:01-18:07 And my wife is repacking rice for the whole week.

18:08-18:11 And Squid Game has also been released.

18:11-18:12 Season 2.

18:13-18:15 So here we go again, time to eat.

18:16-18:21 I'm hoping that I can add a little bit of coding later tonight.

18:22-18:24 Because that's just a little bit, I'll just add the buttons.

18:25-18:26 No promises though.

18:27-18:27 Yeah.

18:29-18:30 So wish me luck.

18:31-18:32 So we're in episode what?

18:33-18:33 Four?

18:33-18:33 Four.

18:34-18:35 Episode four of Squid Game.

18:36-18:40 And we'll watch that first and then I'll try to make it.

18:40-19:41 I'm so lazy right now, right? It's 4:30 PM. The sun is almost down. Yeah, I haven't done anything today or in the previous few days. But yeah, I hope we can do something today. Today is December 30. It is Rizal Day. And our plan didn't work out. We didn't finish the project last Saturday because we finished Squid Game instead, and I think it wasn't as good as season one, but they split it into two seasons, so there's season two and season three, and season three is next year. But anyway, again, this is December 30, two days after. Yesterday my wife and I went to Manila to eat black watcha, so I didn't have time yesterday. But today I actually finished it! Yay!

19:42-19:43 I finished it too.

19:44-19:44 It's here.

19:45-19:46 Let me show you.

19:49-19:56 And here is the final product, at least the version 1 of our Pinoy Gesture, facial recognition application.

19:57-19:58 And here it is.

19:59-20:07 When I pucker my lips and turn my face, the ball follows.

20:07-20:35 I can also turn the facial recognition mesh on and off. It's more fun without the mesh. You can really see how the ball is following the direction of my head. So good!

20:36-20:40 And here, we also made a sort of threshold slider.

20:41-20:48 Because people's lips don't all have the same shape, or the same size.

20:49-20:50 So, it needs to be adjusted.

20:51-20:53 Depending on who's using it, right?

20:53-21:01 I can't really predict the size of a person's lips, so I'll leave that up to you, okay?

21:02-21:04 And this is now live!

21:04-21:08 I published it using GitHub, the GitHub pages.

21:09-21:17 And you can access the application using the link that I'll provide in the description box.

21:18-21:20 I'll just put it in the description if you want the link.

21:21-21:22 Go to this application.

21:23-21:29 I hope you'll enjoy playing and I hope you'll get a little laugh from it.

21:29-21:35 So allow me to explain my learnings during this journey.

21:35-21:47 But before that, I want to thank, shoutout to Joff Tiques of Open Source Software Philippines for sending me this package.

21:48-21:52 Inside, we have a t-shirt. It's a nice t-shirt. I want to wear it.

21:54-22:01 This one, LGTM from OSSPH.org. So, thank you!

22:01-22:24 And there are other goodies here. Actually, a notebook. And of course, the bag. So, thank you, Joff, and the rest of Open Source Software Philippines. So, let's go back to what we were talking about: what are my learnings from this project?

22:25-22:31 Of course, there are a lot of them because this is a facial recognition software that I did for the first time.

22:32-22:41 But despite that, with the use of AI, I was able to build this in much less time than I was expecting.

22:41-22:43 So I expected it to be two weeks long.

22:44-22:50 But with the use of AI, I was able to do it in just what? Less than five days.

22:51-23:08 And that's really amazing. It allows me to actually do other stuff, like celebrating Christmas, celebrating the New Year, and, like yesterday, going on a trip to Intramuros. It saves me a lot of time.

23:09-23:16 And I think that's where AI really excels at, in helping us save a lot of time.

23:17-23:34 We take back the time that would otherwise have been spent working on or debugging a project, because AI can help us speed up the process. But still, the idea came from me.

23:35-23:38 The AI won't come up with those ideas for us.

23:39-23:44 But the conversation with the AI can be a way to polish your idea.

23:44-23:46 And that's where it really helped me.

23:46-23:51 Because I had this idea to use my lips that's like Pinoy, right?

23:51-23:56 To use the Pinoy gesture to move a certain object on the screen.

23:56-24:00 And with the help of AI, I was able to polish the algorithm.

24:01-24:06 Because my idea was that the only basis for the movement would be the lips.

24:08-24:10 And it turned out I could use just the nose.

24:10-24:14 As I mentioned in the previous days, it's just the nose.

24:14-24:18 Because it's fixed in its position relative to the whole face.

24:19-24:21 Versus, for example, the lips that move a bit.

24:23-24:25 It moves like this.

24:25-24:33 That's a huge thing because it helped decrease the amount of time for me to have figured that out.

24:34-24:34 If AI wasn't there.

24:35-24:37 I would have figured it out. Probably.

24:38-24:41 Without AI, though, it might have taken me a while before I got it.

24:41-24:49 Another learning here is that modern browsers are so good, because before, you still needed a JavaScript bundler.

24:50-24:54 I used TypeScript here. I built this project with TypeScript.

24:55-24:59 Before, you would have to transform TypeScript into JavaScript.

25:00-25:05 In addition to that, you would have to compile everything into one JavaScript file.

25:05-25:38 Because older browsers can't load JavaScript in pieces: if the files are separated, they need to be combined into one file. Modern browsers can now import JavaScript files dynamically without bundling them into one file. That means the files stay separate and you can upload them as is. If there are JavaScript files or modules in my repository, that's what gets uploaded.

25:39-25:41 Then, that's what it imports from the HTML file.

25:41-25:59 So the HTML file imports index.js, index.js imports app.js, and app.js imports the rest of my JavaScript files, which were transpiled from TypeScript. The browser imports them all automatically.
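Without a bundler, the browser loads each imported file as a separate request and resolves every relative import against the URL of the file that contains it. The sketch below shows that resolution step using the standard `URL` constructor. The file names `index.js` and `app.js` come from the transcript; the `example.com` address, the `js/` folder, and `cursor/ball.js` are made up for illustration, as is the `resolveImport` helper.

```typescript
// Assumed entry point in index.html (not shown in the video):
//   <script type="module" src="./js/index.js"></script>

/**
 * Resolve a relative import the way a browser does:
 * against the URL of the module that contains the import statement.
 */
function resolveImport(specifier: string, importerUrl: string): string {
  return new URL(specifier, importerUrl).href;
}

// Hypothetical deployment URL for the entry module.
const entryUrl = "https://example.com/app/js/index.js";

// index.js contains: import "./app.js";
const appUrl = resolveImport("./app.js", entryUrl);
// appUrl is "https://example.com/app/js/app.js"

// app.js contains: import "./cursor/ball.js";
const ballUrl = resolveImport("./cursor/ball.js", appUrl);
// ballUrl is "https://example.com/app/js/cursor/ball.js"
```

Because the browser does not guess file extensions, TypeScript sources that target this setup usually write the `.js` extension in their import paths, so that the transpiled output points at files that actually exist on the server.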

26:00-26:00 It's really good!

26:02-26:06 Although it's only available for modern internet browsers.

26:07-26:08 I think it's been around since 2020.

26:10-26:14 I think almost all modern browsers can do this.

26:15-26:19 And I tried it on my iPhone 12 mini.

26:20-26:21 It has Safari.

26:22-26:23 I use Safari.

26:23-26:24 It's one of the last browsers to adopt new technologies.

26:27-26:30 So even using Safari on mobile, it worked.

26:30-26:36 It was a bit weird because I didn't optimize it for mobile usage, but it was still working.

26:37-26:43 The mesh was a bit weird because it stretched, but still, it was working well.

26:43-26:58 And another thing that surprised me is that, although I gave it a pucker threshold here, the accuracy is really good, both in how the ball follows the movement of my face and in the puckering threshold of my lips.

26:59-27:11 So the 0.04 that you will see set by default in the application is I think the average threshold for most people.

27:12-27:14 So just adjust it if it doesn't fit you.

27:15-27:21 You will see that if you increase the threshold, even if you are smiling like this, the ball will follow.
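The threshold behavior described here can be sketched as a simple comparison. The default of 0.04 comes from the video. The direction of the comparison (puckered when the measured lip distance falls below the threshold) is inferred from the remark that a higher threshold lets even a smile move the ball. The names `isPuckered` and `parseThreshold` are hypothetical.

```typescript
// Default taken from the video; described there as roughly average for most people.
const DEFAULT_PUCKER_THRESHOLD = 0.04;

/**
 * Read a threshold from a slider's string value.
 * Falls back to the default when the value is missing or not a positive number.
 */
function parseThreshold(raw: string, fallback: number = DEFAULT_PUCKER_THRESHOLD): number {
  const value = Number.parseFloat(raw);
  return Number.isFinite(value) && value > 0 ? value : fallback;
}

/**
 * Assumed rule: the lips count as puckered when the measured distance
 * between the lip corners is smaller than the threshold.
 */
function isPuckered(lipDistance: number, threshold: number = DEFAULT_PUCKER_THRESHOLD): boolean {
  return lipDistance < threshold;
}

// With the default, a wide mouth (0.05) does not count as a pucker...
const relaxed = isPuckered(0.05);

// ...but raising the threshold to 0.06 makes the same mouth shape qualify.
const relaxedWithHighThreshold = isPuckered(0.05, parseThreshold("0.06"));
```

Exposing the threshold as a slider, rather than hard-coding it, matches the point made in the video that lip shapes and sizes differ from person to person.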

27:22-27:24 It's up to you to play with it.

27:24-27:28 Again, this is available online now via GitHub Pages. The link is in the description.

27:29-27:32 And it's also usable using your mobile device.

27:33-27:36 And the code is open source.

27:37-27:45 Because Open Source Software Philippines gave us a goodie bag, we will give back to the community.

27:46-27:47 I've open sourced the code.

27:48-27:50 It is free for everyone to look at.

27:51-27:52 See how I did it.

27:53-27:53 Look at the algorithm.

27:55-27:56 And try to understand.

27:57-28:09 Another thing that maybe I can share with you from this experience is that when you build a project for your portfolio, it doesn't have to be something that's actually huge.

28:09-28:13 It would be better if it has real-world value.

28:13-28:16 I mean, it's actually solving a problem.

28:16-28:18 But this, it's like, it's nothing, right?

28:18-28:22 It's just something that I feel is fun for me.

28:22-28:28 I've learned a lot and I feel like other people will learn a lot here, especially since the project is open source.

28:29-28:36 If you're building a project for your portfolio like this, I don't know if I would pursue being an AI professional someday.

28:36-28:39 Not sure. I have no plans currently.

28:39-28:46 But in the future, if I need a portfolio as an AI engineer, as an aspiring AI engineer, I can use this.

28:47-28:54 I can create a simple project using AI or facial recognition.

28:55-28:57 So this would be useful for my portfolio.

28:58-29:02 So, even if it doesn't have real-world value, it's okay.

29:03-29:04 It's fun. It's just something you would enjoy.

29:05-29:12 And lastly, this is very important, especially for new developers, is to never reinvent the wheel.

29:13-29:17 There are many libraries and projects already out there.

29:18-29:26 Like what I did, look for open source projects or open source libraries that you can use to achieve what you're trying to do.

29:27-29:41 Like for me, I know there are projects that enable facial recognition and the recognition of landmarks on the face.

29:41-29:44 I don't need to do that on my own.

29:44-30:24 I don't need to build an AI or machine learning model, train it myself, look for data, and all that jazz. I'd never reach the end of this project that way, because all I wanted was to create a simple application that follows my face. That's all. So yes, I could have done it myself. I could have tried to learn how to build a facial recognition model and train it, if that's what I wanted to learn. What I wanted to explore here is, could I do this within a span of two weeks?

30:24-30:33 Can I create this meme of an app in just a set amount of time or less?

30:34-30:36 So I was able to prove that I can do it.

30:37-30:40 And there, I have a project that I can put in my portfolio.

30:40-30:43 Thank you for watching and I'll see you on the next project.

30:44-30:44 Bye!